Lior Libman of One Hour Translation has released a web tool that you can use to quickly determine if text was translated by one of the three major machine translation (MT) engines: Google Translate, Yahoo! Babel Fish, and Bing Translate.

It’s called the Translation Detector.

To use it, you input your source text and target text and then it tells you the probability of each of the three MT engines being the culprit.

How does it know this? Simple. Behind the scenes it takes the source text and runs it through the three MT engines and then compares the output to your target text. So the caveat here is that this tool only compares against those three MT engines.
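To make the idea concrete, here is a minimal sketch of that translate-and-compare approach. It is not the Translation Detector's actual algorithm, which isn't described here. The word-level similarity measure (Python's `difflib.SequenceMatcher`), the `detect` function, and the two stand-in engines are all assumptions for illustration, and no real MT API is called.

```python
from difflib import SequenceMatcher
from typing import Callable, Dict


def similarity(a: str, b: str) -> float:
    """Return a 0..1 word-level similarity between two strings."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()


def detect(source: str, target: str,
           engines: Dict[str, Callable[[str], str]]) -> Dict[str, float]:
    """Translate `source` with each engine and score its output against `target`."""
    return {name: similarity(translate(source), target)
            for name, translate in engines.items()}


# Hypothetical stand-ins for real MT engines (no network calls are made).
fake_engines = {
    "engine_a": lambda text: "the cat sits on the mat",
    "engine_b": lambda text: "a cat is sitting upon the rug",
}

scores = detect("Die Katze sitzt auf der Matte",
                "the cat sits on the mat",
                fake_engines)
best = max(scores, key=scores.get)  # engine whose output is closest to the target
```

A score like this is only a similarity, not a true probability, so a good human translation that happens to match an engine's output would also score high.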

Being the geek that I am, I couldn’t help but give it a test drive.

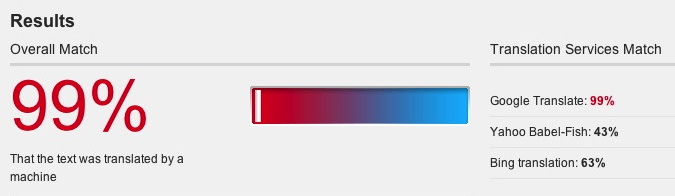

It correctly distinguished between text translated by Google Translate and text translated by Bing Translate (I didn’t try Yahoo!). Below is a screenshot of what I found after inputting the Google Translate text:

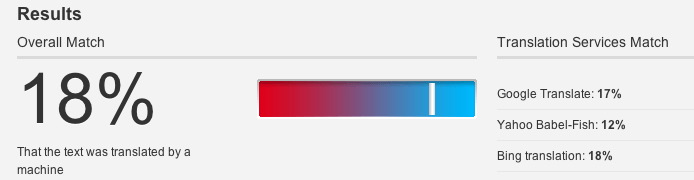

Next, I input source and target text that I had copied from the Apple web site (US and Germany). I would be shocked if the folks at Apple were crunching their source text through Google Translate.

And, sure enough, here’s what the Translation Detector spit out:

So if you suspect your translator is taking shortcuts with Google Translate or another engine, this might be just the tool to test that theory.

Though in defense of translators everywhere, I’ve never heard of anyone resorting to an MT engine to cut corners.

I actually see this tool as part of something bigger: the emergence of third-party tools and vendors that evaluate, benchmark, and optimize machine translation engines. Right now, these three engines are black boxes. I wrote a while back about one person’s efforts to compare the quality of these three engines. But there are lots of opportunities here. As more people use these engines, there will be a greater need for more intelligence about which engine works best for what types of text. And hopefully we’ll see vendors arise that leverage these MT engines for industry-specific functions.

UPDATE: As the commenters noted below, there are limits to the quality of results you will get if you input more than roughly 130 words. The tool is limited by API word-length caps.

The results are apparently dependent on the size of the text. For example, 3 lines of source text and an automated translation show a 0.79 probability (79%) that the text was translated by Google Translate. A 600-word article shows a 0.0 probability (0%) that the text was translated by Google Translate.

However, both texts were translated by Google Translate. The 3-line sample is a subset of the 600-word article.

Thanks Mike — I should have mentioned that length of text is key to the quality of the results.

John, Mike is saying something else entirely: the test *failed* on the longer text and worked on the shorter one. So simply increasing the sample size doesn’t help. My quick examination shows that the test just doesn’t work for anything bigger than somewhere around 130 words (I didn’t bother to chase the exact number down), and that’s what Mike hit.

I ran some tests using a Hungarian text I had personally translated last year, with Google Translate output as the control. Here are my results:

| Sample size (words) | MT probability (human translation) | MT probability (MT sample) |
| --- | --- | --- |
| 690 | 0% | 0% |
| 366 | 0% | 0% |
| 173 | 0% | 0% |
| 150 | 0% | 0% |
| 129 | 5% | 87% |
| 110 | 4% | 84% |
| 83 | 10% | 89% |
| 14 | 38% | 100% |
| 5 | 52% | 100% |

On the shortest text (a chapter title) it had 100% confidence in Google Translate for the MT version, but for my human translation it gave a 52% chance for Google Translate and a 50% chance for Bing Translate. In other words, it gave slightly better than even odds that I was a machine. (I don’t blame it here since the text was so short.)

When I upped the words a bit to 14 (from running text) it still pegged Google Translate at 100%, and the probability that I was a machine started to fall (although it still gave better than one in three that I was a machine).

What I find interesting is that for longer MT texts it actually got *worse* at pegging the MT (dropping as low as 84% for 110 words), so upping text length doesn’t necessarily help it in identifying MT. What increasing the length does seem to do is decrease the false positive rate for actual human translation. So it’s doing a better job at detecting human translation than at detecting MT.

As an additional test, I did a quick post-editing of some Google Translate output with a source size of 78 words. Just the quick post-edit (which was tricky and would have been out of reach for someone who didn’t know the source pretty well) dropped the probability to 18%, even though the text remained extremely stilted. What that says is that this approach probably won’t detect it when translators use MT as the basis for their output.

But no matter what we’re testing, we hit the magic wall somewhere around 130 words and it just stops working. This is what Mike hit and it is a fundamental limit that the site developers ought to mention…

I should add to the above that with the post-edit sample, my two-minute edit took the probability of MT on the 78-word sample from 100% to 18%.

(One other note, aside from the post-edit test, the MT results were all completely unedited in any way.)

So this is an intriguing idea, but ultimately I’m not sure it can do what it claims to do if even minimal post-editing is involved (and if your translator is giving you unedited Google Translate and you don’t know that, you have bigger process problems).

And the length limit is a real killer to finding out how well it works for detecting anything where you should be able to get at certainty.

Hi Arle — Thanks for the analysis! I’ve asked Lior to chime in.

My initial thoughts are either that the MT APIs are capped in terms of word length or that the comparison engine is capped (I’m leaning towards the latter). But you’re right on — the web page should note this text limitation.

Hi John, Mike, Arle,

Interesting that this came up. The documentation for both the Google and Bing translators says there is a limit of up to 2000 chars (including special chars, spaces, etc.), which I would say is around 300 words in total. This is due to the design of the Google and Bing APIs, which basically go through the URL. We are currently working to find a workaround that will enable

As Arle mentioned, this is exactly why Mike’s tests have failed. I’m not sure if the wall is at 130 words but this really depends on special chars, formatting etc.

I’ll be happy to update you if we somehow manage to resolve this issue, as it will give much more power to the Translation Detector, although from my experience, checking the first page or so already gives you a very good feeling for the nature and quality of the translation.

Hi All,

Indeed, we are struggling with Google’s and Bing’s API limitations. The new version will hopefully work around those issues by splitting, translating, and reuniting your texts, but some inaccuracy is inevitable in such situations. It will definitely be most accurate to test texts of no more than 1500 chars (and not too short either!).

If that’s not enough, there seems to be a quota limitation on the Google translation API (Grrr…), which completely shuts down the Google detection feature after a while.

We are resolving this issue with Google at the moment.
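For readers curious what the split, translate, and unite workaround could look like, here is a rough sketch. It is an assumption about the general approach, not the tool's real code: the function names, the sentence-boundary regex, and the `translate` callable are all hypothetical, and the 1500-character default simply mirrors the figure mentioned above.

```python
import re
from typing import Callable, List


def split_into_chunks(text: str, max_chars: int = 1500) -> List[str]:
    """Split text into chunks of at most max_chars, breaking after sentences where possible."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: List[str] = []
    current = ""
    for sentence in sentences:
        # A single sentence longer than the limit has to be hard-split.
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


def translate_in_chunks(text: str, translate: Callable[[str], str],
                        max_chars: int = 1500) -> str:
    """Translate each chunk separately (hypothetical `translate`) and rejoin the results."""
    return " ".join(translate(chunk) for chunk in split_into_chunks(text, max_chars))


sample = "One two three. Four five six! Seven eight nine? Ten."
chunks = split_into_chunks(sample, max_chars=30)
```

Splitting at sentence boundaries keeps each request under the cap, but an engine that translates chunks in isolation loses cross-sentence context, which is one plausible source of the inaccuracy mentioned above.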

Lior, thanks for the response. I think it probably is the character limit that we hit (I didn’t check character counts in what I did).

I would guess that you’re right: checking the first page would give you a good idea. It would be interesting to run some more post-editing tests to see what happens. I personally don’t have a problem if a translator wants to use Google Translate and then post-edit the results into shape (as long as the translator knows enough to actually do the post-editing job!), but I know some companies/individuals care a lot about this issue, and the ability to detect post-editing might be useful to them.

I think your idea is really interesting. Out of curiosity, what do you think of my observation that it actually seems to be better at detecting human translation than machine translation (at least based on my non-statistical analysis)? That kind of surprised me, but I think that’s actually a more useful metric.

It’s also interesting to me that they aren’t inversely related: it can be really good at telling you that human translation is human translation, but not quite as good in telling you that MT is MT for sure. That tells me that there is something interesting going on and that there is something special about human translation…

Arle, thank you for clarifying my initial comment.

I used Google Translate to translate English to Norwegian. With a 61-word source text, the results are as follows:

* Google Translate: 25%

* Yahoo Babel-Fish: 0%

* Bing translation: 52%

Lior, why does Machine Translation Detector show that the translation is twice as likely to come from Bing as from Google? Possibly, you can include some background information on the website that explains how texts are evaluated. (I do not mean to belittle your software. As John wrote, we need tools such as Machine Translation Detector.)

That’s a neat idea. But the big question of course is, what algorithm exactly are they using to compare the two translations?

Mike,

Could you please send me the text you used?

I’d be happy to run some tests on it and do some fine-tuning of our detection algorithm.

If you’d rather not post it, let me know and I’ll share my contact details.

I will certainly share our detection algorithm here and on the website later today.

English source text: http://www.international-english.co.uk/clear-english-gives-good-machine-translation.html

Norwegian translation: http://www.international-english.co.uk/mt-evaluation-en-no.xls

* The Fluency worksheet contains the Norwegian translation (as sentence-level segments).

* The Accuracy worksheet contains both the Norwegian translation and the English source.

Spanish and Welsh translations are also available. See the links in the sidebar on the English source text.

If you have questions, feel free to contact me (www.techscribe.co.uk/techw/contact.htm).

Well, the real question, I think, is what kind of relationship you have with your translators that pushes you to resort to this. And what kind of third-rate translators do you use who would resort to using MT without any post-editing, without telling you, and pretend it is their own work?

Results say “xx% that the text was translated by a machine”. Are they in fact saying “the machine translation matches the source to xx%” ?

If the machine translation was a good one, and the supplied translation was also good, wouldn’t that lead to false positives? Especially in more technical pieces.

Mike,

I was playing with the text you posted, and I’m wondering which text exactly you used (the 61-word text).

I tested several pieces of text from your post, and I imagine that Google Translate changes over time; your text is from 2009. If you take the original text you have, translate it with Google today, and then run the test again, I guess you will get different results.

Again, thanks for the feedback, and I’d be happy to get more.

I did some experimenting with a French to English text (96 words in French). Here are my results in detail:

http://best4edu.com/PDF_Files/MT%20Detector%20Analysis.pdf

Lior,

For the 61-word text, I do not remember which text I used. I started with the full article, and then removed text.

@Adriana: I don’t think most of us are worried about anything in specific. Most of us are geeking out a little about the idea: it’s interesting and worth playing around with. For instance, I would be very curious about whether good post-editing removes the MT “signature”: that might help dictate certain kinds of business decisions and workflows, quite independent of whether we have first-rate or twenty-seventh-rate translators.

It’s more of a question of interest (at least for me) than any sort of worry. (As I mentioned, if you aren’t detecting unedited MT some other way, you have bigger process problems than this can address.)

@Chris. I think that’s a really interesting question. As I noted, it seems the process is better at detecting human translation than at detecting MT, which suggests that as the quality improves, the rates would probably do some interesting things. I’m not sure what, but the relationship between accuracy of false positives on human translation and false negatives on MT doesn’t seem to entirely correlate.

Most Publishers and LSPs I meet are complaining about NOT being able to get their translators to use MT, not the other way around!

@Arle I thought it was a bit of geeking around 🙂

But to answer your question: yes, post-editing certainly can take away the “machine translation” feel of such a result. And that is why simple machine translation is good for content that does not carry your company’s brand voice (like chats and emails, just to get the “gist” of things), while machine translation with post-editing is much better for content that has a certain brand voice (like product documentation and HR documents), improving quality while still saving time and cost. And finally, nothing beats human translation for superb quality when you are talking about marketing material, the content that represents a company’s brand, its voice.

I made a human translation, and when I checked it with the detector, the results showed an 11% probability that it was made with MT. What does that mean? Please comment.

I have only run into one case in three years of a translation that was so bad that I suspected it was MT. I think that MT can be a useful check on your supposition about how to translate something provided that you know what you are doing, and I think automatically ruling it out in every situation is counterproductive. (e.g. the translation I got used intestinal languages for gut strings, in an article about music). I also recall that when my son did a paper for Spanish class, the teacher sent it back with a “gotcha” because there was a 30%+ coincidence with some websites and even more with papers sent in by other students on the same topic, even though he hadn’t used those websites or collaborated with other students. I told her that this wasn’t surprising but it wasn’t a cause for alarm anyway. I would suspect that using MT as a checkup of a translation that was done entirely without MT would show a considerable coincidence, even though the person doing the translation didn’t use the MT to start with.

Paul is right, there is nothing wrong with the controlled use of MT. I once got a manual written in English by a Chinese engineer, and it contained an unintelligible sentence. The Chinese text was there too (I do not know Chinese), so I could copy the corresponding fragment, put it into MT, and the translation was clear!

To check the performance, I used a currently translated, hotel-tourism, copywriting-related text. The full text, 1400 words, showed the following result: Bing 5%, the others 0%. I then entered only 200 words of the same text, and the results were: Bing 10%, Babel Fish 3%, Google 0%.

As already discussed: the shorter the text, the higher the probability that „involving MT“ will be shown.

Probability will increase if you are entering technical documentations, repetitive texts and so on, which leads to the question: does it make sense to use MT’s, let’s say, for a first draft? Can I remove the „typical MT“ (look;-)) and feel?

Use for a first draft: I tried it, and in my opinion it makes no sense. It’s very time-consuming. If I am doing a translation, I usually, if time is not an issue, always do a first draft. That means I hammer the text, machine-gun-like, into the PC/TM, regardless of sense, typos, etc. Then I leave it for one day. Now it’s time for post-editing;-). Crucially, at least for me, and as in chess („the Kiebitz sees more“), I act as if I am not the one involved.

The difference between these two approaches: The first way, using MT, I am „not in the text, not in the idea (which should be) behind“ and so on. Therefore, it’s time-consuming, painful and nothing to enjoy. The second way, „acting like a MT“, hammering the text into…I am „in the text“ from the beginning, regardless of typos, sense/nonsense. Then, post-editing is fun and interesting.

Can one take away the MT feeling?: If it is a MT, and not as described acting like MT, you can’t. Some months ago I got an invitation, and since I was bored, I tried it out. Google delivered in five seconds kind of a translation. Then I started post-editing, and I think I did it well. The result, some weeks later: High probability of MT-Use…

It makes no difference whether you use Systran (I tried it out, thinking it could boost my productivity, and soon uninstalled it), Babel Fish, or whatever. The point, for me, is always the same: training the machine to a level where the output can be used „easily“ is too time-consuming. It will take some years.

Use of MT and post-editing: it’s more widely used than one might think. I worked for some months for a very big company offering translation only for tourism-related copywriting texts. They were looking for post-editors. First, I had to carry out a text. They graded from 1 to 5, and I had been told that a „3“ is sufficient to pass! I passed and subsequently received texts for post-editing (the post-editors had a choice of workloads: from 1000 to 10000 per day!!). They were all MT output, most of it of a quality that defies description. Then the company stopped work. Why?!;-)

By the way: if I have a text which could be used for a first MT draft – then it’s more favorable to use your (a) TM!